Globally, billions of dollars are lost each year to electricity theft and related losses. This phenomenon, known as non-technical loss (NTL) of energy, takes many forms, including meter bypassing, meter tampering, and unpaid bills. The consequence? A less reliable electricity grid and higher costs for all customers.

In recent years, the advent of advanced metering infrastructure (AMI) and the resulting abundance of data have made it possible to accurately identify and reduce NTL. More specifically, using predictive analytics, machine learning (ML), and artificial intelligence (AI), utilities can analyze massive data sets from many sources (electricity consumption, meter events, work orders, contract information, weather, etc.) to detect NTL with high precision.

C3.ai has been at the forefront of solving this problem for many years, deploying production ML algorithms to utilities across the United States and Europe to detect and reduce NTL. These algorithms represent nine years and $300 million of investment in data analysis, feature engineering, model building, and algorithm design.

The Application of Deep Learning

Deep artificial neural networks (DNNs) have gained much attention in recent years owing to their success in image, speech, and natural language processing. DNNs provide an immensely rich class of hypothesis functions, from which the model is chosen by optimizing an appropriate loss function. One of the major advantages of DNN models is that they largely eliminate extensive feature engineering, which is a major component of traditional ML algorithms: neural networks learn the relevant features directly from the data, together with the mapping from those features to the output. However, they usually require massive amounts of training data and are computationally very expensive to train. This is where graphics processing units (GPUs) have proven crucial to the success of DNNs.
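In standard notation, "choosing the model by optimizing a loss function" is empirical risk minimization over the network parameters $\theta$, given $n$ training examples $(x_i, y_i)$. As a hedged illustration only (the article does not say which loss C3.ai used), for a binary label $y_i \in \{0, 1\}$ (NTL confirmed or not) and a predicted probability $f_\theta(x_i) \in (0, 1)$, a common choice is binary cross-entropy:

$$
\hat{\theta} = \arg\min_{\theta} \frac{1}{n} \sum_{i=1}^{n} \ell\big(f_\theta(x_i),\, y_i\big),
\qquad
\ell(p, y) = -\big[\, y \log p + (1 - y) \log(1 - p) \,\big]
$$

Because $f_\theta$ maps raw inputs to the output directly, the features are learned as part of $\theta$ rather than engineered by hand.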

Recently, the data science team at C3.ai implemented a DNN algorithm for NTL detection that uses mostly raw data. The algorithm is a stacked bidirectional long short-term memory (LSTM) model with attention, an architecture previously studied in the literature in the context of natural language processing and machine translation, and it is trained on NVIDIA GPUs.
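The architecture named above can be sketched in plain Python to show how the pieces fit together. This is a minimal, untrained illustration under stated assumptions, not C3.ai's implementation: the class names (`LSTMCell`, `BiLSTMLayer`, `StackedBiLSTMAttention`), the layer sizes, the random weight initialization, the simple dot-product attention scoring, and the input features are all hypothetical. Each bidirectional layer runs one LSTM forward in time and one backward, concatenates their hidden states at every time step, and passes the result to the next layer; attention then weights the time steps and pools them into one vector that is mapped to a score.

```python
import math
import random


def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))


def matvec(W, v):
    """Multiply matrix W (list of rows) by vector v."""
    return [sum(w * x for w, x in zip(row, v)) for row in W]


class LSTMCell:
    """One LSTM direction with randomly initialized (untrained) weights."""

    def __init__(self, input_size, hidden_size, rng):
        self.hidden_size = hidden_size
        n = input_size + hidden_size
        # One weight matrix and bias per gate: input (i), forget (f), candidate (g), output (o).
        self.W = {gate: [[rng.uniform(-0.1, 0.1) for _ in range(n)]
                         for _ in range(hidden_size)] for gate in "ifgo"}
        self.b = {gate: [0.0] * hidden_size for gate in "ifgo"}

    def step(self, x, h, c):
        z = x + h  # concatenate current input with previous hidden state
        pre = {gate: [a + b for a, b in zip(matvec(self.W[gate], z), self.b[gate])]
               for gate in "ifgo"}
        i = [sigmoid(v) for v in pre["i"]]
        f = [sigmoid(v) for v in pre["f"]]
        g = [math.tanh(v) for v in pre["g"]]
        o = [sigmoid(v) for v in pre["o"]]
        c = [fv * cv + iv * gv for fv, cv, iv, gv in zip(f, c, i, g)]
        h = [ov * math.tanh(cv) for ov, cv in zip(o, c)]
        return h, c

    def run(self, xs):
        h = [0.0] * self.hidden_size
        c = [0.0] * self.hidden_size
        outputs = []
        for x in xs:
            h, c = self.step(x, h, c)
            outputs.append(h)
        return outputs


class BiLSTMLayer:
    """Runs one LSTM forward and one backward in time; concatenates their states."""

    def __init__(self, input_size, hidden_size, rng):
        self.fwd = LSTMCell(input_size, hidden_size, rng)
        self.bwd = LSTMCell(input_size, hidden_size, rng)

    def run(self, xs):
        forward = self.fwd.run(xs)
        backward = self.bwd.run(xs[::-1])[::-1]  # re-align backward states with time steps
        return [hf + hb for hf, hb in zip(forward, backward)]  # size 2 * hidden_size


class StackedBiLSTMAttention:
    """Hypothetical stacked BiLSTM + attention pooling + logistic output."""

    def __init__(self, input_size, hidden_size, num_layers, seed=0):
        rng = random.Random(seed)
        self.layers = []
        size = input_size
        for _ in range(num_layers):
            self.layers.append(BiLSTMLayer(size, hidden_size, rng))
            size = 2 * hidden_size  # next layer consumes concatenated states
        self.attn_w = [rng.uniform(-0.1, 0.1) for _ in range(size)]  # attention scoring vector
        self.out_w = [rng.uniform(-0.1, 0.1) for _ in range(size)]
        self.out_b = 0.0

    def forward(self, xs):
        hs = xs
        for layer in self.layers:
            hs = layer.run(hs)
        # Attention: score each time step, softmax the scores, take the weighted sum.
        scores = [sum(w * v for w, v in zip(self.attn_w, h)) for h in hs]
        m = max(scores)  # subtract max for numerical stability
        exps = [math.exp(s - m) for s in scores]
        total = sum(exps)
        alphas = [e / total for e in exps]
        context = [sum(a * h[k] for a, h in zip(alphas, hs)) for k in range(len(hs[0]))]
        logit = sum(w * v for w, v in zip(self.out_w, context)) + self.out_b
        return sigmoid(logit), alphas


# Hypothetical input: 7 daily readings of (consumption in kWh, meter-event flag).
readings = [[12.1, 0.0], [11.8, 0.0], [3.2, 1.0], [2.9, 0.0],
            [3.1, 0.0], [2.7, 1.0], [3.0, 0.0]]
model = StackedBiLSTMAttention(input_size=2, hidden_size=4, num_layers=2)
score, weights = model.forward(readings)
print(f"NTL score: {score:.3f}")
print("attention weights:", [round(a, 3) for a in weights])
```

Since the weights are random, the score carries no meaning until the model is trained. A production model would typically be built in a framework such as PyTorch or TensorFlow and trained on GPUs; plain Python is used here only to keep every step visible.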

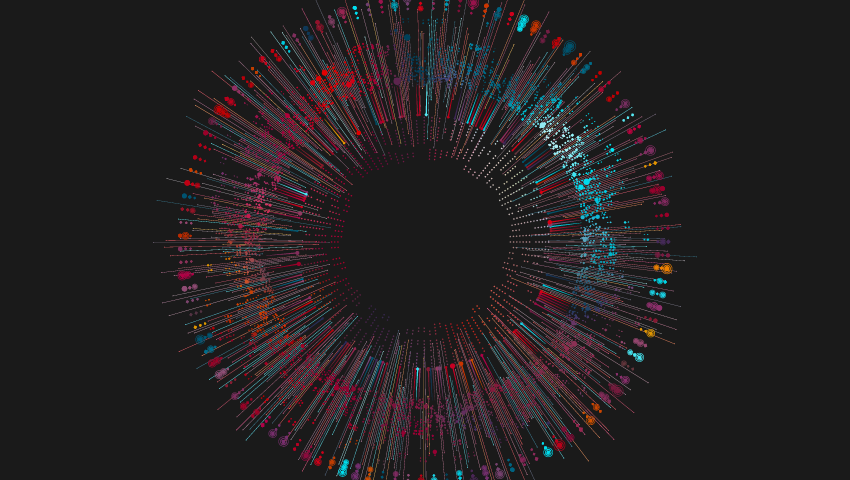

The C3.ai team’s DNN model achieved higher accuracy than a traditional model on a test set (see Figure 1). More importantly, development of the DNN model was significantly faster than that of the traditional ML model: with feature engineering effort minimized, C3.ai achieved results as good as the traditional model's in one-fifth of the time. Results of the DNN model are currently being evaluated through field inspections to provide a concrete assessment of its performance.

Figure 1: Hit rate (precision) vs. rank (score relative to the general population) for the DNN model and a traditional model.
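The hit-rate-versus-rank metric in Figure 1 can be illustrated with a short Python sketch. It assumes, as one reading of the caption, that "rank" means the top fraction of accounts ordered by model score and that "hit rate" is the precision among those accounts, i.e., the share later confirmed as NTL. The function names `hit_rate_at_rank` and `hit_rate_curve` and the sample scores and labels are hypothetical, not data from the evaluation.

```python
def hit_rate_at_rank(scores, labels, top_fraction):
    """Fraction of confirmed NTL cases among the top-scored accounts.

    scores: model scores, higher = more suspicious.
    labels: 1 if inspection confirmed NTL, else 0.
    top_fraction: share of the population inspected, in (0, 1].
    """
    if not 0 < top_fraction <= 1:
        raise ValueError("top_fraction must be in (0, 1]")
    if len(scores) != len(labels) or not scores:
        raise ValueError("scores and labels must be non-empty and equal length")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    k = max(1, int(top_fraction * len(ranked) + 0.5))  # accounts inspected, rounded
    hits = sum(label for _, label in ranked[:k])
    return hits / k


def hit_rate_curve(scores, labels, steps=10):
    """Hit rate at rank = 1/steps, 2/steps, ..., 1."""
    return [hit_rate_at_rank(scores, labels, s / steps) for s in range(1, steps + 1)]


# Hypothetical inspection outcomes for 10 accounts.
scores = [0.91, 0.85, 0.72, 0.66, 0.40, 0.35, 0.22, 0.15, 0.10, 0.05]
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]
print(hit_rate_at_rank(scores, labels, 0.2))  # 1.0
print(hit_rate_at_rank(scores, labels, 0.5))  # 0.6
```

Under this reading, a model that ranks true NTL cases near the top keeps a high hit rate at small ranks, which is where inspection crews focus their limited capacity.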

Based on these results, C3.ai believes DNNs will prove applicable to an emerging, broad class of industrial IoT problems that combine data sources such as time series and demographic data. These use cases, which have significant global economic impact, include fraud detection, predictive maintenance, and supply network management.