- AI Software

- C3 AI Applications

- C3 AI Applications Overview

- C3 AI Anti-Money Laundering

- C3 AI Cash Management

- C3 AI Contested Logistics

- C3 AI CRM

- C3 AI Decision Advantage

- C3 AI Demand Forecasting

- C3 AI Energy Management

- C3 AI ESG

- C3 AI Health

- C3 AI Intelligence Analysis

- C3 AI Inventory Optimization

- C3 AI Process Optimization

- C3 AI Production Schedule Optimization

- C3 AI Property Appraisal

- C3 AI Readiness

- C3 AI Reliability

- C3 AI Smart Lending

- C3 AI Supply Network Risk

- C3 AI Turnaround Optimization

- C3 Generative AI Constituent Services

- C3 Law Enforcement

- C3 Agentic AI Platform

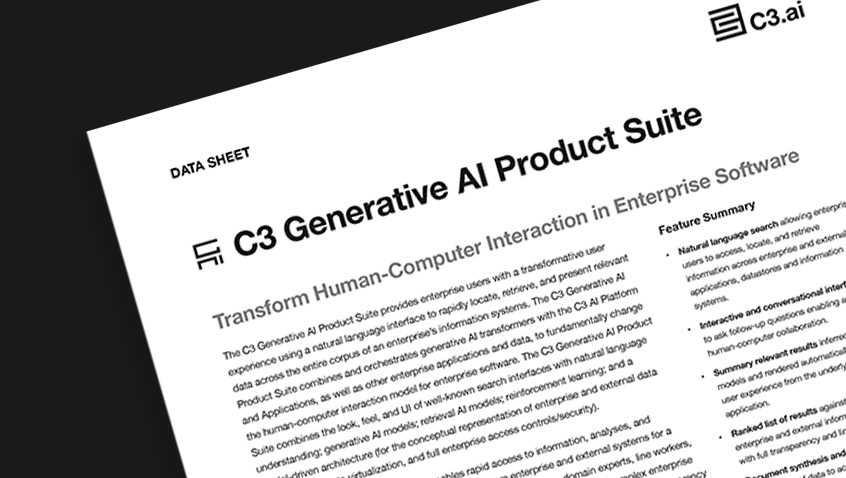

- C3 Generative AI

- Get Started with a C3 AI Pilot

- Industries

- Customers

- Events

- Resources

- Generative AI for Business

- Generative AI for Business

- C3 Generative AI: How Is It Unique?

- Reimagining the Enterprise with AI

- What To Consider When Using Generative AI

- Why Generative AI Is ‘Like the Internet Circa 1996’

- Can the Generative AI Hallucination Problem be Overcome?

- Transforming Healthcare Operations with Generative AI

- Data Avalanche to Strategic Advantage: Generative AI in Supply Chains

- Supply Chains for a Dangerous World: ‘Flexible, Resilient, Powered by AI’

- LLMs Pose Major Security Risks, Serving As ‘Attack Vectors’

- What Is Enterprise AI?

- Machine Learning

- Introduction

- What is Machine Learning?

- Tuning a Machine Learning Model

- Evaluating Model Performance

- Runtimes and Compute Requirements

- Selecting the Right AI/ML Problems

- Best Practices in Prototyping

- Best Practices in Ongoing Operations

- Building a Strong Team

- About the Author

- References

- Download eBook

- All Resources

- Publications

- Customer Viewpoints

- Blog

- Glossary

- Developer Portal

- Generative AI for Business

- News

- Company

- Contact Us

- search Search

- Generative AI for Business

- Reimagining the Enterprise with AI

- What To Consider When Using Generative AI

- Why Generative AI Is ‘Like the Internet Circa 1996’

- Can the Generative AI Hallucination Problem be Overcome?

- Transforming Healthcare Operations with Generative AI

- Data Avalanche to Strategic Advantage: Generative AI in Supply Chains

- Supply Chains for a Dangerous World: ‘Flexible, Resilient, Powered by AI’

- LLMs Pose Major Security Risks, Serving As ‘Attack Vectors’

- C3 Generative AI: Getting the Most Out of Enterprise Data

- The Key to Generative AI Adoption: ‘Trusted, Reliable, Safe Answers’

- Generative AI in Healthcare: The Opportunity for Medical Device Manufacturers

- Generative AI in Healthcare: The End of Administrative Burdens for Workers

- Generative AI for the Department of Defense: The Power of Instant Insights

- C3 AI’s Generative AI Journey

- What Makes C3 Generative AI Unique

- How C3 Generative AI Is Transforming Businesses

Generative AI and the Future of Business

Generative AI Terms and Their Definitions

Before we dive into the benefits of generative AI for the enterprise, it’s important to understand the key terms in this field:

- Natural Language Processing (NLP): A branch of AI that enables a computer to understand, analyze, and generate human language.

- Transformer: A neural network architecture (introduced by Google in 2017) that makes it practical to train significantly larger models on larger datasets than earlier architectures allowed. It enabled the emergence of recent LLMs, which process and learn from sequences of words by recognizing patterns and relationships within the text.

- Large Language Model (LLM): A deep learning model specialized in text generation. Today almost all LLMs are based on the transformer architecture (with variations from the original). As the name indicates, the real revolution is in the scale of these models: the very large number of parameters (typically in the tens to hundreds of billions) and the very large corpus of text used to train them.

  - Examples of commercial / closed-source models: OpenAI’s GPT-3/3.5/4, Google’s PaLM, Amazon’s Titan (offered through the Amazon Bedrock service), Anthropic’s Claude, etc.

  - Examples of open-source models: Google FLAN-T5 and FLAN-UL2, MPT-7B, Falcon, etc.

- Pre-trained Model / Foundation Model: An LLM trained from scratch in a self-supervised way (without labels) on a very large dataset and made available for others to use. Foundation models are generally not intended to be used directly and are typically fine-tuned for specific tasks.

- Fine-tuning: The process of taking a pre-trained model and adapting it to a specific task (summarization, text generation, question-answering, etc.) by training it in a supervised way (with labels). Fine-tuning requires much less data and compute power than the original pre-training. On their target task, well fine-tuned models often outperform much larger general-purpose models.

- Hallucination: A situation where the LLM generates a wrong output that may nonetheless read as plausible. The root cause is that the LLM relies on its internal “knowledge” (what it has been trained on), and that knowledge is not applicable to the user query.

- Multi-modal AI: A type of AI able to process and understand different types of inputs, such as text, speech, images, and videos.

- Retrieval Model: A system (typically a transformer) used to retrieve data from a source of information. Combining retrieval models with large language models partially addresses the hallucination problem by anchoring the LLM in a known corpus of data.

- Vector Store: A type of data store specialized in managing vector representations of documents (known as embeddings). These stores are optimized to efficiently find nearest neighbors (according to various distance metrics) and are central architectural pieces of a generative AI platform.

- Enterprise AI: Providing predictive insights to expert users for improving performance of enterprise processes and systems. (See What Is Enterprise AI?)

- Generative AI for Enterprise Search: Leveraging generative AI’s intuitive search bar to access the predictive insights and underlying data of enterprise AI applications, improving decision-making for all enterprise users.
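To make the Retrieval Model and Vector Store definitions above more concrete, here is a minimal Python sketch of the idea, not a description of any particular product. Everything in it is an illustrative assumption: `embed` is a toy bag-of-words stand-in for a learned embedding model, `ToyVectorStore` and `build_grounded_prompt` are hypothetical names, the sample documents are made up, and the nearest-neighbor search is brute force rather than the optimized indexes a real vector store would use.

```python
import math
from collections import Counter
from typing import List


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (stand-in for a learned model)."""
    return Counter(text.lower().split())


def cosine_similarity(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors; 0.0 if either is empty."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


class ToyVectorStore:
    """Hypothetical in-memory vector store with brute-force nearest-neighbor search."""

    def __init__(self) -> None:
        self._items = []  # list of (document, embedding) pairs

    def add(self, document: str) -> None:
        self._items.append((document, embed(document)))

    def nearest(self, query: str, k: int = 1) -> List[str]:
        """Return the k stored documents most similar to the query."""
        query_vec = embed(query)
        ranked = sorted(
            self._items,
            key=lambda item: cosine_similarity(query_vec, item[1]),
            reverse=True,
        )
        return [doc for doc, _ in ranked[:k]]


def build_grounded_prompt(store: ToyVectorStore, question: str, k: int = 1) -> str:
    """Retrieve the k most similar documents and place them in the prompt as context."""
    context = "\n".join(store.nearest(question, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


# Made-up sample documents, for illustration only.
store = ToyVectorStore()
store.add("pump 7 vibration exceeded the alert threshold on monday")
store.add("the quarterly demand forecast for region east rose four percent")
store.add("invoice processing time dropped after the workflow update")

prompt = build_grounded_prompt(store, "what happened to pump 7 vibration")
print(prompt)
```

The prompt that would be sent to an LLM now carries the retrieved document as context, which is the "anchoring in a known corpus" that the Retrieval Model definition describes. In practice the embedding would come from a trained model and the search from an approximate nearest-neighbor index, but the flow of embed, search, then prompt stays the same.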