What To Consider When Using Generative AI

Employees in offices everywhere are relying on generative AI tools as a one-size-fits-all way to streamline work-related tasks. Whether expediting routine chores, like generating emails and meeting notes, or assisting in job-specific tasks — drafting marketing materials, crafting job posts, debugging code, conducting data analysis — these AI tools have simplified workstreams across industries.

They have also led to a range of problems that professionals must consider when determining appropriate uses of consumer generative AI tools.

First, there’s the matter of security. What many users of commercial generative AI tools likely don’t realize is that whenever they enter a query into a large language model (LLM), that information is sent to and stored on an external server. This can put intellectual property (IP) and other sensitive information at risk, a problem known as LLM-caused leakage.

This issue led Samsung to ban its employees from using OpenAI’s ChatGPT at the office after they inadvertently leaked confidential information on three occasions. Two instances involved employees entering sensitive source code into ChatGPT. In the third, an employee submitted a transcript of a recorded meeting to ChatGPT to generate notes. Other companies, including Apple, Amazon, Bank of America, Goldman Sachs, and JPMorgan Chase, have also restricted use of the tool.

OpenAI has taken steps to address these concerns, such as letting users opt out of saving their chat history. However, OpenAI still retains those chats for 30 days to monitor for abuse. So if you’re using generative AI tools, be mindful of the sensitivity of the work you’re asking them to assist with.

The next big consideration is accuracy. Although LLMs sound authoritative and churn out polished answers in a flash, they frequently generate false information. This phenomenon is known as hallucination. Because LLMs are built to produce plausible-sounding text rather than to verify facts, they will typically give an answer even when they cannot tell whether it is correct. This has led to some well-publicized mistakes. In a lawsuit against the airline Avianca, an attorney used ChatGPT to find cases for his brief, and the cases it supplied never existed.

In July 2023, these privacy and accuracy concerns led the U.S. Federal Trade Commission (FTC) to open an investigation into OpenAI. The FTC is seeking answers on how OpenAI handles users’ data and how it plans to curb its LLMs’ tendency to hallucinate false, harmful information about real people.

Yet enterprises shouldn’t dismiss generative AI because of these concerns. In the past, people worried that smartphones, social media platforms, and chat apps would harm businesses, yet all of them evolved into useful workplace technologies. To some degree, generative AI tools are the next installment in this line of consumer-turned-workplace tools. But when adopting consumer generative AI tools, enterprises should consider their limitations. When asked, “What should I not use ChatGPT for?”, ChatGPT itself advised against relying on it to make financial decisions or handle sensitive information. Many workplace professionals have ignored that advice.

In these early days of generative AI, there have been plenty of missteps. But for help with memos, say, or synthesizing non-confidential notes, consumer generative AI tools will continue to be useful.

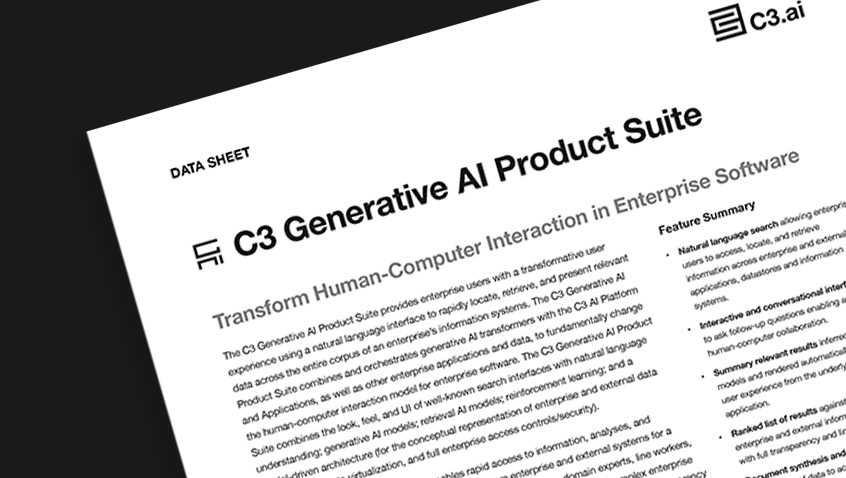

For enterprises to adopt generative AI in a way that’s safe, accurate, and transparent, they need to find a technology provider with deep expertise in modeling and analyzing enterprise data, like C3 AI. By unifying disparate data from across multiple enterprise applications and data lakes, the C3 Generative AI solution provides business users with relevant, trustworthy, and reliable answers, free of hallucinations. Because C3 Generative AI is designed to handle sensitive data and operate in secure, air-gapped, private cloud environments, users can generate enterprise-specific responses without fear of LLM-caused leakage of sensitive information.

In the early days of the web, companies struggled to decide what employees should be allowed to do on office computers, from both a productivity and a security standpoint. A generation later, that issue still exists, albeit at a far more sophisticated level.