IT for Enterprise AI

C3 AI Platform for Digital Transformation

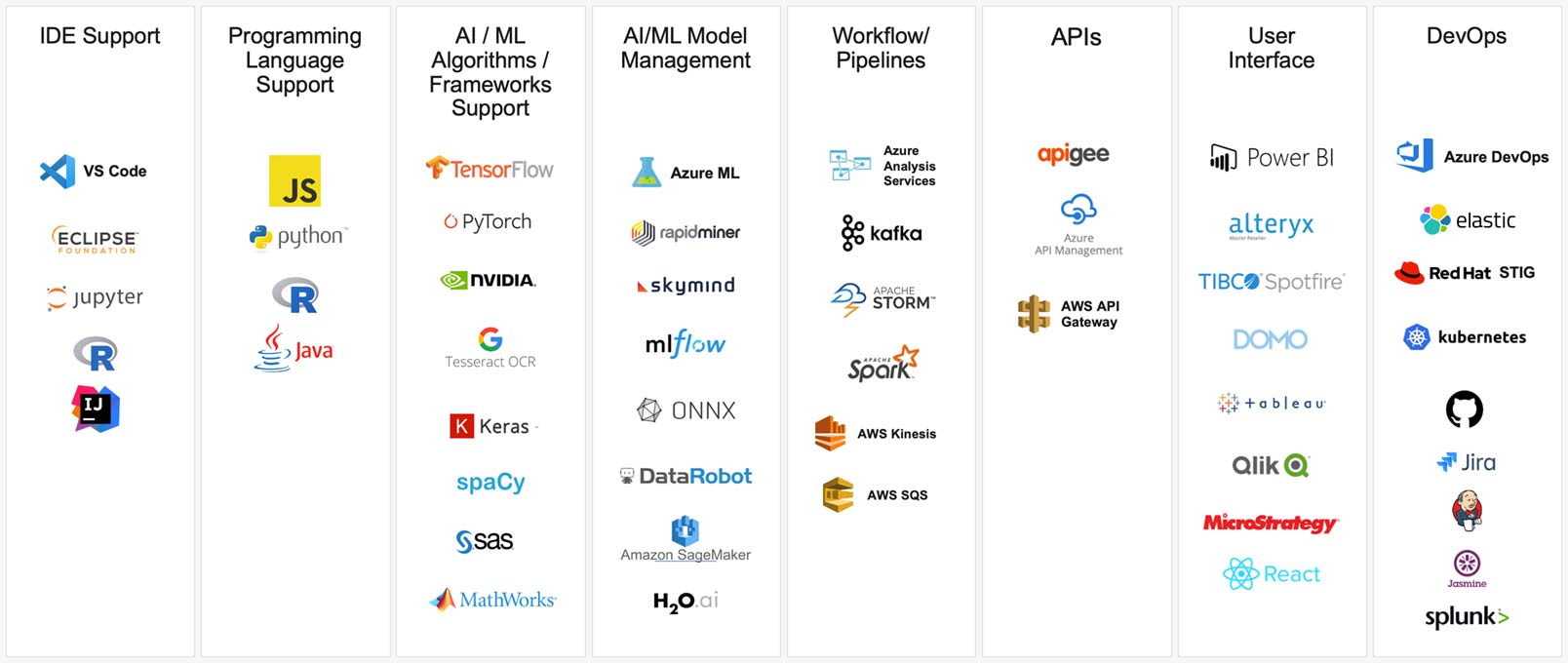

The C3 AI Platform is an open platform with plug-ins and flexibility for data scientists and developers – including IDEs, programming languages, tools, DevOps capabilities, and others.

This section reviews each technical requirement and how it is satisfied through components of a next-generation application platform.

1. Data Aggregation: Unified Federated Data Image Across the Business

Process re-engineering across a company’s business requires integrating data from numerous systems and sensors into a unified federated data image, and keeping that data image current in near real-time as data changes occur.

To facilitate data integration and correlation across systems, the C3 AI Platform provides a Data Integration service with a scalable enterprise message bus. The Data Integration service provides extensible industry-specific data exchange models. Examples of data exchange models include HL7 for healthcare, eTOM for telecommunications, CIM for power utilities, PRODML and WITSML for oil & gas, and SWIFT for banking. Mapping source data systems to a common data exchange model significantly reduces the number of system interfaces required to be developed and maintained across systems. As a result, C3 AI Platform deployments with integrations to 15 to 20 source systems typically take 3–6 months as opposed to years.
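The interface-count argument above can be illustrated with a minimal sketch. It is not the C3 AI Data Integration API: the `MeterReading` record, the two source layouts, and the mapper names are hypothetical, and the count assumes one mapping per source system into the common model versus a directed interface between every pair of systems.

```python
from dataclasses import dataclass


# Hypothetical canonical record. The field names are illustrative only and
# are not taken from a real exchange model such as CIM or HL7.
@dataclass(frozen=True)
class MeterReading:
    asset_id: str
    timestamp: str
    kwh: float


def from_billing(row: dict) -> MeterReading:
    """Map a row from a hypothetical billing system to the canonical record."""
    return MeterReading(row["acct_meter"], row["read_dt"], float(row["usage_kwh"]))


def from_scada(row: dict) -> MeterReading:
    """Map a row from a hypothetical SCADA feed that reports watt-hours."""
    return MeterReading(row["tag"], row["ts"], row["wh"] / 1000.0)


def point_to_point_interfaces(n_systems: int) -> int:
    """Directed interfaces needed if every system exchanges data with every other."""
    return n_systems * (n_systems - 1)


def canonical_interfaces(n_systems: int) -> int:
    """Mappings needed if every system maps once to the common model (assumption)."""
    return n_systems
```

Under these assumptions, 20 source systems need 20 mappings to the common model, compared with 380 directed point-to-point interfaces.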

Unified Federated Data Image

2. Multi-Cloud Computing and Data Persistence (Enterprise Data Lake)

Cost-effectively processing and persisting large-scale datasets requires an elastic cloud architecture that scales out and in, with support for private cloud, public cloud, or hybrid cloud deployments.

Cloud portability is achieved through container technology on the C3 AI Platform (for example, Mesosphere). However, the platform is optimized to take advantage of differentiated services. For example, the platform takes advantage of AWS Kinesis and Azure Streams when running on AWS and Azure respectively.

Multi-cloud operation is also supported. For example, the Platform Services can operate on AWS, invoke Google Translate or speech recognition services, and access data stored on a private cloud. An instance of the Platform might need to be deployed in-country on Azure Stack to conform to data sovereignty regulations.

The platform also supports installation in a customer’s virtual private cloud account (e.g., an Azure or AWS account) and deployment in specialized clouds such as AWS GovCloud or C2S with industry- or government-specific security certifications.

Support for running analytics and AI predictions (or inferences) on remote gateways and edge compute devices is also required to address low-latency compute requirements or in situations where network bandwidth is constrained or intermittent (e.g., aircraft).

Data persistence of the unified data image requires a multiplicity of data stores depending on the data and anticipated access patterns. Relational databases are required to support transactions and complex queries, and key-value stores are required for data such as telemetry that needs low-latency reads and writes. Other stores are also required: distributed file systems such as HDFS for unstructured audio or video, as well as multi-dimensional stores and graph stores. If a Data Lake already exists, the platform can map and access data in-place from that source system.
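The pairing of access patterns with store types described above can be sketched as a simple rule table. This is an illustration only: `AccessPattern`, `StoreKind`, and the rule order are assumptions made for the example, not how the platform selects a store.

```python
from dataclasses import dataclass
from enum import Enum


class StoreKind(Enum):
    RELATIONAL = "relational"
    KEY_VALUE = "key_value"
    DISTRIBUTED_FS = "distributed_fs"
    GRAPH = "graph"


# Hypothetical description of how a dataset will be accessed.
@dataclass(frozen=True)
class AccessPattern:
    needs_transactions: bool = False
    low_latency_io: bool = False
    unstructured_media: bool = False
    relationship_traversal: bool = False


def choose_store(pattern: AccessPattern) -> StoreKind:
    """Pick a store kind from the access pattern, following the text's pairings."""
    if pattern.unstructured_media:
        return StoreKind.DISTRIBUTED_FS  # e.g. audio or video files
    if pattern.relationship_traversal:
        return StoreKind.GRAPH
    if pattern.low_latency_io and not pattern.needs_transactions:
        return StoreKind.KEY_VALUE  # e.g. telemetry
    return StoreKind.RELATIONAL  # transactions and complex queries
```

A real deployment would weigh more factors (volume, retention, query shape), so the rules here are a starting point rather than a recommendation.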

Infrastructure as a Service

3. Platform Services & Data Virtualization for Accessing Data In-Place

Enterprise AI applications require a comprehensive set of platform services for processing data in batches, in microbatches, as real-time streams, and iteratively in memory across a cluster of servers, so that data scientists can test analytic features and algorithms against production-scale data sets. Secure data handling is also required to ensure data is encrypted both in motion and at rest.
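Of the processing modes listed above, microbatching is the easiest to show in a few lines. The sketch below is a generic, single-process illustration using only the Python standard library; the `microbatches` helper is hypothetical and is not a platform service.

```python
from itertools import islice
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def microbatches(stream: Iterable[T], size: int) -> Iterator[List[T]]:
    """Group an incoming stream into lists of at most `size` items."""
    if size < 1:
        raise ValueError("size must be at least 1")
    iterator = iter(stream)
    while True:
        batch = list(islice(iterator, size))
        if not batch:
            return  # stream exhausted
        yield batch
```

With `size=1` this degenerates to record-at-a-time streaming, and with a very large `size` it approaches batch processing, which is why microbatching is often described as a middle ground between the two.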

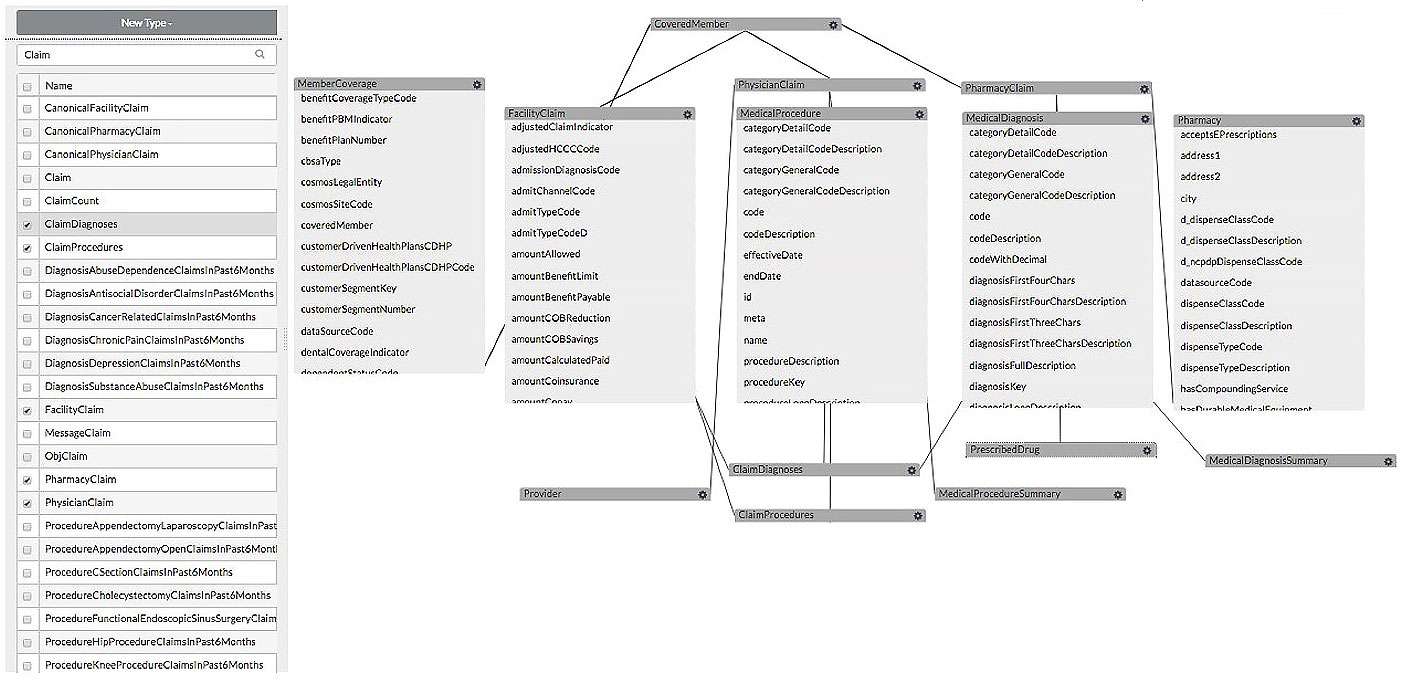

The model-driven architecture, through the C3 AI Type System, abstracts these native platform services to allow pluggability of these services without the need to alter application code.

The model-driven architecture supports data virtualization, which allows application developers to instantiate Types and manipulate data without knowledge of the underlying data stores. The platform provides support for relational stores, distributed file systems, key-value stores, graph stores as well as legacy applications and systems such as SAP, OSIsoft PI, and SCADA systems.
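The idea of manipulating data without knowledge of the underlying store can be sketched with a small interface and two interchangeable backends. This is not the C3 AI Type System: `Store`, `DictStore`, `SqliteStore`, and `rename_asset` are hypothetical names, and the two backends stand in for a key-value store and a relational store.

```python
import sqlite3
from typing import Dict, Optional, Protocol


class Store(Protocol):
    """Minimal storage interface that application code depends on."""

    def put(self, key: str, value: str) -> None: ...

    def get(self, key: str) -> Optional[str]: ...


class DictStore:
    """In-memory stand-in for a key-value store."""

    def __init__(self) -> None:
        self._data: Dict[str, str] = {}

    def put(self, key: str, value: str) -> None:
        self._data[key] = value

    def get(self, key: str) -> Optional[str]:
        return self._data.get(key)


class SqliteStore:
    """Stand-in for a relational store, backed by an in-memory SQLite database."""

    def __init__(self) -> None:
        self._db = sqlite3.connect(":memory:")
        self._db.execute("CREATE TABLE kv (k TEXT PRIMARY KEY, v TEXT)")

    def put(self, key: str, value: str) -> None:
        self._db.execute("INSERT OR REPLACE INTO kv (k, v) VALUES (?, ?)", (key, value))

    def get(self, key: str) -> Optional[str]:
        row = self._db.execute("SELECT v FROM kv WHERE k = ?", (key,)).fetchone()
        return row[0] if row else None


def rename_asset(store: Store, asset_id: str, new_name: str) -> Optional[str]:
    """Application logic written once against the interface; returns the old name."""
    old_name = store.get(asset_id)
    store.put(asset_id, new_name)
    return old_name
```

Because `rename_asset` only depends on the `Store` interface, swapping `DictStore` for `SqliteStore` requires no change to the application function, which is the property the text attributes to data virtualization.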

Platform Services

4. Enterprise Object Models and Microservices

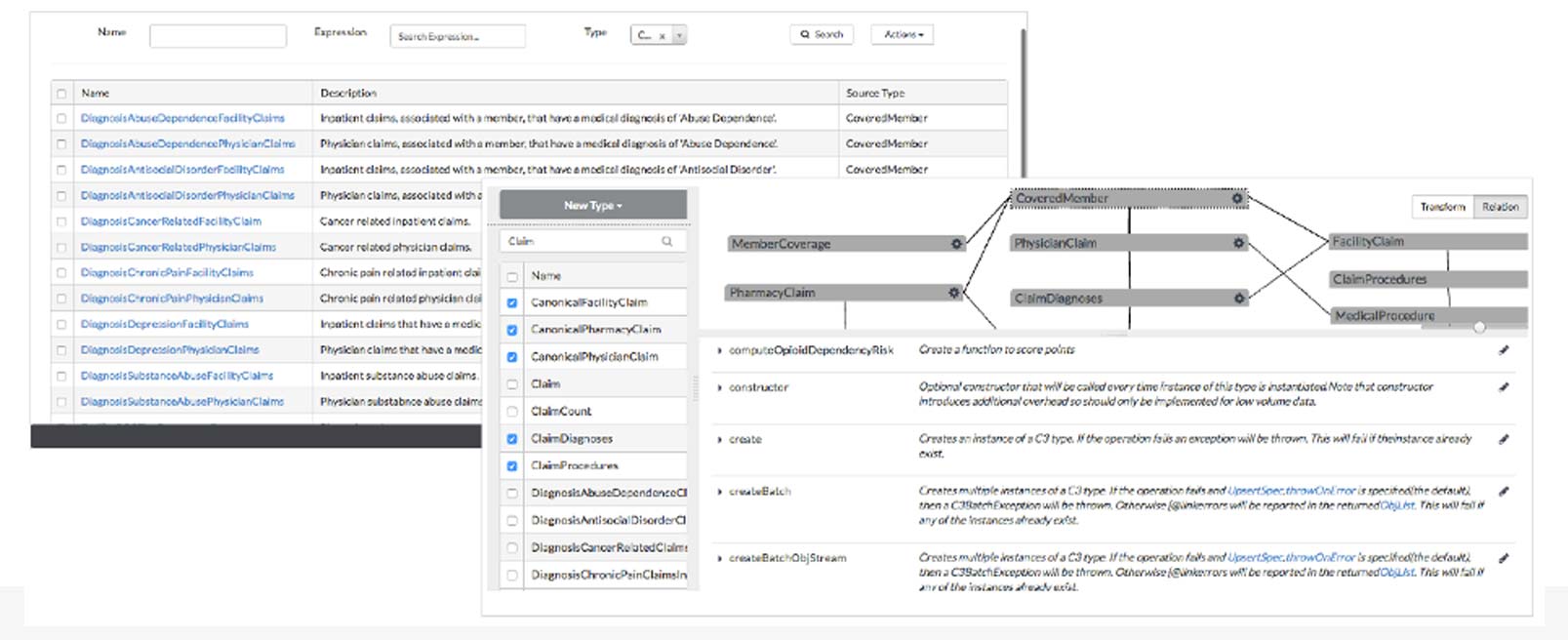

Re-engineering processes across an organization requires a consistent object model across the enterprise. The enterprise object model represents objects and their relationships independent of the underlying persistence data formats and stores. In contrast to the passive entity/object models in typical modeling tools, the object model is active: the platform interprets it at runtime, which provides significant flexibility to handle object model and schema changes. Changes to the object model are versioned and immediately active without the need to rewrite application code.

Enterprise Object Model

Similarly, a catalog of AI microservices is published and available enterprise-wide subject to security / authorization access controls.

Enterprise AI Microservices

5. AI & Optimization

An integrated full life-cycle algorithm development experience is required for data scientists to rapidly design, develop, test and deploy machine learning and deep learning algorithms.

Such an environment allows data scientists to use the programming language of their choice – Python, R, Scala, Java – to develop and test machine learning and deep learning algorithms against a current production snapshot of all available data. Testing against production data helps data scientists achieve high levels of machine learning accuracy (precision and recall).

The machine learning algorithm should be deployable in production without the effort, time, and errors that translation to a different programming language introduces.

The AI machine learning algorithms should provide APIs to programmatically trigger predictions and re-training as necessary.

AI predictions should be conditionally triggered based on the arrival of dependent data. AI predictions can trigger events and notifications or be inputs to other routines including simulations involving constraint programming.
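Conditional triggering on the arrival of dependent data can be sketched as a small event handler. The class below is a hypothetical illustration, not a platform API: `PredictionTrigger`, its parameters, and the placeholder scoring function in the test are all assumptions.

```python
from typing import Callable, Dict, Set


class PredictionTrigger:
    """Run a prediction only once every required input has arrived."""

    def __init__(
        self,
        required: Set[str],
        predict: Callable[[Dict[str, float]], float],
        on_result: Callable[[float], None],
    ) -> None:
        self._required = set(required)
        self._predict = predict
        self._on_result = on_result  # e.g. raise an event or feed another routine
        self._latest: Dict[str, float] = {}

    def on_data(self, name: str, value: float) -> bool:
        """Record an input; return True if a prediction was triggered."""
        self._latest[name] = value
        if not self._required.issubset(self._latest):
            return False  # still waiting for at least one dependent input
        self._on_result(self._predict(dict(self._latest)))
        return True
```

In this sketch, once all inputs are present, every later arrival re-runs the prediction with the latest values. A production design would likely add windowing or staleness checks, which are omitted here.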

AI Architecture

6. Data Governance & Security

The C3 AI Platform supports multi-level user authentication and authorization. Access to all Types (data objects, methods, aggregate services, ML algorithms) is subject to authorization. Authorization is dynamic and programmatically settable; for example, authorization to access data or invoke a method might be subject to the user’s ability to access specific data rows.

The platform also supports external security authorization services, for example centralized consent management services in financial services and healthcare.
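Dynamic, programmatically settable row-level authorization can be illustrated with a minimal sketch. The names `User`, `RowAuthorizer`, and the region-based rule are hypothetical and are not the platform's authorization API; the sketch also assumes a deny-by-default policy for Types that have no rule.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet, List

Row = Dict[str, object]


@dataclass(frozen=True)
class User:
    name: str
    regions: FrozenSet[str]


Rule = Callable[[User, Row], bool]


class RowAuthorizer:
    """Holds per-Type rules that can be replaced at runtime."""

    def __init__(self) -> None:
        self._rules: Dict[str, Rule] = {}

    def set_rule(self, type_name: str, rule: Rule) -> None:
        self._rules[type_name] = rule

    def visible_rows(self, user: User, type_name: str, rows: List[Row]) -> List[Row]:
        rule = self._rules.get(type_name)
        if rule is None:
            return []  # assumption: deny when no rule is registered
        return [row for row in rows if rule(user, row)]


# Usage: a user may only see meter rows in regions assigned to them.
auth = RowAuthorizer()
auth.set_rule("Meter", lambda user, row: row["region"] in user.regions)
rows = [{"id": 1, "region": "west"}, {"id": 2, "region": "east"}]
alice = User("alice", frozenset({"west"}))
```

Calling `auth.visible_rows(alice, "Meter", rows)` returns only the `west` row, and replacing the rule with `set_rule` changes the outcome without touching the calling code.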

Governance & Security

7. Development Tools and Application Management

All C3 AI Types are accessible through standard programming languages (R, Java, JavaScript, Python) and IDEs (Eclipse, Azure Developer Tools).

A complete, easy-to-use set of visual tools is available to rapidly configure applications by extending the metadata repository. Metadata repository APIs are also available to synchronize the object definitions and relationships with external repositories or to introspect available Types.

Application version control is available through synchronization with common source code repositories such as Git.

C3 AI Integrated Development Studio